Winter 2008

The Brain: A Mindless Obsession

– Charles Barber

Despite stunning advances in neuroscience and bold claims of revelations from new brain-scan technologies, our knowledge about the brain’s role in human behavior is still primitive.

A team of American researchers attracted national attention last year when they announced results of a study that, they said, reveal key factors that will influence how swing voters cast their ballots in the upcoming presidential election. The researchers didn’t gain these miraculous insights by polling their subjects. They scanned their brains. Theirs was just the latest in a lengthening skein of studies that use new brain-scan technology to plumb the mysteries of the American political mind. But politics is just the beginning. It’s hard to pick up a newspaper without reading some newly minted neuroscientific explanation for complex human phenomena, from schizophrenia to substance abuse to homosexuality.

The new neuroscience has emerged from the last two decades of formidable progress in brain science, psychopharmacology, and brain imaging, bringing together research related to the human nervous system in fields as diverse as genetics and computer science. It has flowered into one of the hottest fields in academia, where almost anything “neuro” now generates excitement, along with neologisms—neuroeconomics, neurophilosophy, neuromarketing. The torrent of money flowing into the field can only be described in superlatives—hundreds of millions of dollars for efforts such as Princeton’s Center for the Study of Brain, Mind, and Behavior and MIT’s McGovern Institute for Brain Research.

Psychiatrists have been in the forefront of the transformation, eagerly shrugging off the vestiges of “talk therapy” for the bold new paradigms of neuroscience. By the late 1980s, academic psychiatrists were beginning literally to reinvent parts of the discipline, hanging out new signs saying Department of Neuropsychiatry in some medical schools. A similar transformation has occurred in academic psychology.

A layperson leafing through a mainstream psychiatric journal today might easily conclude that biologists had taken over the profession. “Acute Stress and Nicotine Cues Interact to Unveil Locomotor Arousal and Activity-Dependent Gene Expression in the Prefrontal Cortex” is the title of a typical offering. The field has so thoroughly cast its lot with biology, and with the biology induced by psychoactive drugs, that psychiatrists can hardly hope to publish in one of the mainstream journals if their article tells the story of an individual patient, or includes any personal thoughts or feelings about the people or the work that patient was engaged with, or fails to include a large dose of statistical data. Psychiatry used to be all theories, urges, and ids. Now it’s all genes, receptors, and neurotransmitters.

As a result of these changes, the field, once seen as the province of woolly-headed eccentrics, has gained a new public image. Psychiatry is now seen as a solid branch of medicine, a bona fide science built on white-coated certitude. It has joined Big Science. The completion of the Human Genome Project in 2003 contributed to the growing popular belief that psychiatric disorders proceed in neat Mendelian inheritable patterns, and that psychiatrists are starting to methodically unlock these patterns’ mysteries. But if anything has been gleaned from the last two decades of work in the genetics of psychiatric disorders, it is that the origins of these maladies are terribly complex. No individual gene for a psychiatric disorder has been found, and none likely will ever be. Psychiatric disorders are almost certainly the product of an infinitely complex dialogue between genes and the environment.

Nevertheless, earlier paradigms in academic psychology and psychiatry—“soft” disciplines such as old-fashioned psychoanalysis and behaviorism and psychotherapy—have been chucked aside like so many rotting vegetables. Ironically, this shift—which is terribly premature—is occurring even as psychotherapy is rapidly improving. Psychiatry used to be brainless, it’s said by some in the field, and now it’s mindless.

Sea changes such as the advent of biopsychiatry are not unusual in the history of American psychiatry. In fact, they have been common. One paradigm replaces another, and each one is embraced with certainty and passion. Only in hindsight are the revolutions questioned and discredited.

Fifty years ago, psychoanalysis enjoyed the same prestige and influence that biopsychiatry does today. In 1959, during the heyday of psychoanalysis, the sociologist Philip Rieff observed that “in America today, Freud’s intellectual influence is greater than that of any other modern thinker. He presides over the mass media, the college classroom, the chatter at parties, the playgrounds of the middle classes.” The literary critic Lionel Trilling, in 1947, called Freud’s thought “the only systematic account of the human mind, which, in point of subtlety and complexity, of interest and tragic power, deserves to stand beside the chaotic mass of psychological insights which literature has accumulated through the centuries.” Today, of course, psychoanalysis is largely a cultural afterthought for all but a few wealthy acolytes.

The history of American psychiatry can be divided into three overlapping eras: Asylum Psychiatry, Community Psychiatry, and today’s Corporate Psychiatry. In its improbable odyssey, psychiatry has gone from the back wards of hospitals to the boardrooms of corporations, from invisible to virtually omnipresent. As the psychiatrist and author Jonathan Metzl has pointed out, for its first century at least, psychiatry dealt with what were considered obscure mental processes and was conducted in the shadows. Now it is everywhere—in the movies, in advertisements, on television shows, and, most significantly, in our bloodstreams.

Asylum Psychiatry was born around the beginning of the 19th century with the founding of a number of institutions for the mentally ill, such as Maryland’s Spring Grove State Hospital. By 1904 there were 150,000 patients in U.S. psychiatric hospitals, and by midcentury the asylum population peaked at more than a million. Asylum Psychiatry followed two tracks—one perfectly well intentioned and generally benign, the other horrific. The initial impetus was to provide retreats, often in sylvan settings, where, in the absence of any actual evidence-based treatments, patients could at least be left alone in a tranquil setting. But there was an equally long tradition in the asylums of providing (or imposing) the most wretched treatments imaginable. What Daniel Defoe wrote in 1728 has been echoed many times since: “If they are not mad when they go to these cursed Houses, they are soon made so by barbarous Usage they there suffer. . . . Is it not enough to make anyone mad to be suddenly clap’d up, stripp’d, whipp’d, ill fed and worse. . . ?”

In his 1948 book The Shame of the States, journalist Albert Deutsch compared state mental hospitals with Nazi concentration camps, their “buildings swarming with naked humans herded like cattle and treated with less concern, pervaded by a fetid odor so heavy, so nauseating, that the stench seemed to have almost a physical existence of its own.” Not uncommonly, patients were sterilized so as to permanently halt the moral contagion of their illness. Editorials in The New York Times and The New England Journal of Medicine endorsed the practice. By 1945 some 45,000 Americans had been sterilized, almost half of them psychiatric patients in state facilities.

In 1916, Dr. Henry Cotton of Trenton State Hospital, believing that germs from tooth decay led to insanity, removed patients’ teeth and other body parts, such as the bowels, which he thought might be the causes of their madness. He killed almost half the patients who received his “thorough” treatment, more than 100 people. Cotton’s practices were covered up by the hospital board and the leading figure in American psychiatry of the day, Adolf Meyer, and Cotton was allowed to continue practicing at the hospital for nearly 20 more years. In a eulogy for Cotton in 1933, Meyer lauded his “extraordinary record of achievement.”

Two years later, the Portuguese neurologist Egas Moniz performed the first lobotomy, or what he called a “leucotomy” (white cut). Moniz had failed to win a Nobel Prize for his earlier brain research and was eager to make a splash. After hearing a lecture in which the speaker conjectured that the prefrontal cortex was the site of psychopathology, he decided to try out a method of destroying that part of the brain in his patients. One of the brutalized subjects of these experiments repaid Moniz in 1939 by shooting him, leaving him partially paralyzed. Nonetheless, Moniz’s efforts were rewarded with the Nobel Prize in Physiology or Medicine in 1949.

The American champion of the lobotomy, Walter Freeman, roamed the country as a veritable Johnny Appleseed of the technique, to which he added his own refinements, which amounted to jamming an ice pick through the patient’s eye sockets and destroying the frontal lobes. A successful operation, in Freeman’s view, was one in which the patient became adjusted at “the level of a domestic invalid or household pet.” Between 1935 and 1950 some 20,000 American psychiatric patients were subjected to lobotomies, or, as the procedure was more gently called, “psychosurgery.”

What finally ended the lobotomy era was not any newfound compassion or enlightenment, but the emergence of antipsychotic drugs that made psychosurgery “redundant.” The groundwork of the new Community Psychiatry had been laid when the psychiatric profession took its first, tentative steps from universities and hospitals into office practice between the world wars, led by émigré European psychoanalysts who established themselves in prestigious private practices, mainly in the big cities of the East. Especially after World War II, American-born psychiatrists rapidly abandoned their bases in universities and hospitals for private practice in order to serve the cash-carrying middle and upper classes. (Psychiatrists are medical doctors with a specialization in psychiatry; psychoanalysts may have either an M.D. or, thanks to relatively recent rule changes, a Ph.D., in addition to psychoanalytic training.) By 1955, more than 80 percent of American psychiatrists were working in private practice. At the time, neither group was very large—there were only 1,400 psychoanalysts in the world in 1957, and a somewhat larger number of psychiatrists in the United States alone. (Today, there are some 45,000 psychiatrists and more than 3,500 psychoanalysts in the United States.) But the influence of the two groups was profound, greatly amplified by legions of social workers, assorted therapists, and popular culture (see sidebar on page 38).

As with Asylum Psychiatry, there have been two prongs of Community Psychiatry. One has proved a great success, the other a national disgrace. For the “worried well,” the 1960s through the ’90s saw an explosion in the number of non–psychiatrist therapists (social workers, clinical psychologists, addiction counselors), who have treated an ever-expanding proportion of the population. By the early 1980s, one in 10 Americans was being treated for mental problems.

Community Psychiatry for the seriously mentally ill began with the introduction of Thorazine in the 1950s, which led relatively quickly to the mass depopulation of the asylums. At first, “deinstitutionalization” was thought to be a wonderful thing. By giving their patients a medication that appeared to work and then sending them on their way, biologically minded psychiatrists thought they were setting patients free. Those with an activist bent saw the release of patients into the community as an act of liberation from the oppressive institutions and hierarchies of medical care. State governments were only too happy to divest themselves of the bad karma and expense of massive networks of long-term care facilities.

For all the high expectations and lofty rhetoric, the reality was that the effectiveness of the drugs was overestimated and the necessity of appropriate community support for patients was underestimated or ignored. The goal of John F. Kennedy’s 1963 Community Mental Health Act to create a national network of outpatient clinics proved too ambitious. The clinics that were opened were quickly co-opted for therapy sessions for the middle-class worried well, and funding withered during the prosecution of the Vietnam War. Kennedy’s death, too, certainly played a part. He was an early advocate of community treatment, influenced no doubt by the experience of his sister Rosemary, who was developmentally disabled and mentally ill, and who herself had been subjected to a lobotomy.

Eventually there was simply no place for patients to go but the parks, the bus stations, the public libraries, the emergency rooms, and the homeless shelters. Deinstitutionalization coincided with the arrival of AIDS and the emergence of crack cocaine in the early 1980s, and the numbers of the homeless mentally ill rose dramatically across the country.

Today, state hospitals house only about five percent as many patients as they did at their peak. Community Psychiatry is being eroded by managed care and the national obsession with psychiatric medications instead of therapy. The new, biologically driven Corporate Psychiatry, with its blockbuster products and its hi-tech glow, is where the juice is now.

And today’s psychiatry really is corporate. A large proportion, arguably the largest portion, of the major pharmaceutical companies’ extraordinary profits in recent decades has come from psychiatric drugs. The medical historian Carl Elliott has written that antidepressants were one of the most profitable products in the most profitable industry in the world over the course of the 1990s. The first tremors of Corporate Psychiatry were felt in the late 1960s and the ’70s, when Valium became the top-selling drug in America, and the earthquake began in 1988 with the introduction of Prozac, which eventually became one of the best-selling drugs in history. Antidepressants are now the sixth-best-selling category of drugs in the world, and antipsychotics the seventh. By 2002, more than 11 percent of American women and five percent of American men were taking antidepressants, or about 25 million people. And the use of antidepressants, despite bad press and black-box warnings indicating the resultant risk of suicidal thoughts in young people, has only increased in recent years. Counting the multiple and serial prescriptions often issued to patients, along with renewals, some 227 million antidepressant prescriptions were dispensed in the United States in 2006.

Two developments were at the heart of the revolution that has brought us the biologically based Corporate Psychiatry—the discovery of drugs that actually work, at least for some people, and the rise of brain imaging.

Thorazine was the first drug to work. Its invention has been called one of the seminal events in human history, and it was the beginning of the revolution in psychiatry, comparable in its importance to the introduction of penicillin in general medicine. Like many other significant drugs, it was discovered by accident, and when it worked, no one had any idea why. In 1952, Henri Laborit, a French surgeon, was looking for a way to reduce surgical shock in patients. Much of the shock came from anesthesia; Laborit reasoned that if he could use less anesthetic, patients could recover more quickly. Casting about for a solution, he tried Thorazine, a shelved medication that had been developed to fight allergies. Laborit noticed an immediate change in his patients’ mental state. They became relaxed and seemingly indifferent to the surgery awaiting them. Laborit thought Thorazine might be helpful to psychiatric patients, but at that time “no one in their right mind in psychiatry was working with drugs. You used shock or various psychotherapies,” says psychiatrist Heinz Lehmann, Thorazine’s first champion in North America.

The psychiatrist Pierre Deniker heard about Thorazine from his brother-in-law, a colleague of Laborit’s, and Deniker tried it on his most agitated, uncontrollable patients in the recesses of a Parisian psychiatric hospital. This was a startlingly novel idea. “Those cases were in the back wards and that was it. The notion you could ever do anything about [them] had never occurred to anyone,” said John Young, an executive at the drug company that later bought the rights to Thorazine (and first put it on the market as an antivomiting treatment). Another French doctor, Jean Perrin, gave Thorazine to a barber from Lyon who had been hospitalized for years and was unresponsive to any intervention. The barber promptly awoke and declared that he knew who and where he was, and that he wanted to go home and get back to work. Perrin hid his shock and asked the patient to give him a shave, which he did, perfectly. Another patient, suffering from catatonic schizophrenia, had been frozen in various postures for years. He responded to the drug in one day. Within 24 hours, he was greeting the staff by name and asking for billiard balls to juggle.

After Deniker and others got over their initial shock and enthusiasm, it became clearer what antipsychotic drugs can do—and what they can’t. In no fashion do they cure the illness, but for many, if not most, people with psychotic disorders such as schizophrenia, they do help to make the condition eminently more tolerable. In many cases the medications, quite literally, lower the volume. Many patients have told me that the drugs dampen the volume of the voices that plague them, reducing the screams and rants to faint echoes, and occasionally drowning them out entirely. Psychiatrists compare the way in which such drugs help, when they are effective, to how insulin works for people with diabetes: Although far from being a cure, they do help the majority of patients manage, and allow them, for the most part, to function, or function better. Or, as Scientific American more clinically put it, “Antipsychotics stop all symptoms in only about 20 percent of patients. . . . Two-thirds gain some relief from antipsychotics yet remain symptomatic . . . and the remainder show no significant response.”

What also became evident over time were the incredibly harsh side effects of the first antipsychotics: involuntary muscle movements, endless pacing (or the “Thorazine shuffle,” as it became known), and, for some, a horrible restlessness, the feeling of needing to crawl out of one’s skin. Later antipsychotics, though they generally have a better side-effect profile, still can lead to major problems, such as massive weight gain and high cholesterol. Patients vote with their feet on the tradeoff between the positive and negative effects of these drugs. In a massive “real world” study published in 2006, three-quarters of those given antipsychotic drugs stopped taking them by the end of the study’s 18 months.

Almost as soon as Thorazine became available, psychiatric hospitals in the United States gave it to nearly all their patients, and it was widely prescribed for various uses outside hospital walls. From 1954, when Thorazine was approved by the U.S. Food and Drug Administration, through 1964, 50 million people took the drug.

If Thorazine started the revolution in psychiatry, brain imaging finished it. While brain imaging has its origins with computerized tomography (CT) in the 1960s, its most spectacular contributions have occurred in the past 15 years. Even when CT scans did reveal startling images in the 1970s, the results were received with doubt. A landmark 1976 study that showed that the brains of people with schizophrenia had much larger ventricles than “normals” did was met with skepticism, as schizophrenia was assumed to be a psychological disease.

As George H. W. Bush’s 1990 presidential proclamation announcing “The Decade of the Brain” explained, three things happened simultaneously in the 1980s that set up the miraculous pictures to come: Technologies such as positron-emission tomography (PET) and magnetic resonance imaging (MRI) allowed researchers, for the first time, to observe the living brain; computer technology reached a level of power and sophistication sufficient to handle neuroscience data in a manner that reflected actual brain function; and discoveries at the molecular and cellular levels of the brain shed greater light on how neurophysiological events translate into behavior, thought, and emotion.

The first brain-imaging technologies, CT scans and MRI, could image brain structure: what the brain would look like if you could take it out of the skull and place it on a table. MRI had the advantage of producing better-quality images without requiring the use of ionizing radiation in the brain, as CT scans do. The resolution of MRI is superb—it yields “slices” of brain that look like they were obtained in a postmortem pathology lab. PET and SPECT (single photon emission computed tomography) scans, which came later, provide an image of brain activity, or function, by measuring blood flow in the brain as a reflection of brain activity. PET actually shows how neuroreceptors live in the brain—allowing one to see the distribution and number of receptors in particular areas of the brain, the concentration of neurotransmitters at the synapse, and the affinity of a receptor for a particular drug. PET specifically measures glucose metabolism, an indicator of which parts of the brain are using the most energy, which allows neuroscientists to undertake the process of mapping the neural basis of thought and emotion in the living brain.

The most spectacular technology of all, fMRI—or functional magnetic resonance imaging—burst on the scene in the early 1990s. Unique in that it is able to provide images of both structure and function, fMRI produces not just slices of the brain but what are, in effect, extremely high-resolution movies of what the brain looks like when it is working. By measuring blood flow, which is an indicator of brain activity, fMRI reveals which parts of the brain are being used most actively during a given task. That permits observation of the brain while it is actually functioning as a mind—thinking, remembering, seeing, hearing, imagining, experiencing pleasure or pain.

Unlike earlier technologies, fMRI requires a very short total scan time (one to two minutes), and it is entirely noninvasive and extraordinarily comprehensive: It can measure brain responses at 100,000 locations. Of the wonders of brain imaging, and in particular fMRI, the leading neuropsychologist Steven Pinker has written exuberantly, “Every facet of mind, from mental images to the moral sense, from mundane memories to acts of genius, has been tied to tracts of neural real estate. Using fMRI . . . scientists can tell whether the owner of the brain is imagining a face or a place. They can knock out a gene and prevent a mouse from learning, or insert extra copies and make it learn better.”

While the sudden visibility of the brain is indeed remarkable, the greater significance is perhaps more symbolic. Brain images are still far cruder than one would think after reading the sensational revelations attributed to them in the science pages of newspapers and magazines. And it must be remembered that these are secondary images of blood flow and glucose in the brain, and not of brain tissue itself. We seem to forget that it is not as if a camera were entering the brain and taking pictures of what is going on. At this point, the most that can be said is that brain imaging indirectly and very broadly measures the activity of groups of thousands of neurons when the brain is engaged in a physical or mental task. While there are some correlations between brain activity in certain regions and external, observable behavior, it is very hard to gauge what the pictures really mean. How does the flow of blood in parts of the brain correspond to feelings, moods, opinions, emotions, imagination? It remains a daunting task to create theories to “operationalize” what is going on underneath all the pretty pictures.

The state of the art right now is that we can read brains—to some very crude extent—but we can’t even begin to read minds. Wall Street Journal science writer Sharon Begley has coined the term “cognitive paparazzi” to describe those who claim they can. “What does neuroscience know about how the brain makes decisions? Basically nothing,” says Michael Gazzaniga, director of the SAGE Center for the Study of the Mind at the University of California, Santa Barbara.

Another limitation of contemporary neuroscience, Gazzaniga says, is that many brain imaging studies are based on averages of the scans of many patients. “The problem is if you go back to the individual scans, you will see wide variation in the part of the brain that’s activated.” And if you were to do the same scans of the same activity a year later, you might get quite different results.

“The community of scientists was excessively optimistic about how quickly imaging would have an impact on psychiatry,” says Steven Hyman, a professor of neurobiology and provost at Harvard as well as former director of the National Institute of Mental Health. “In their enthusiasm, people forgot that the human brain is the most complex object in the history of human inquiry, and it’s not at all easy to see what’s going wrong.”

There are currently no standard ways of treating or assessing mental illness based on brain images. The only unequivocal clinical use of imaging is in detecting raw abnormalities. “The only thing imaging can tell you is whether you have a brain tumor or some other gross neurological damage,” says Paul Root Wolpe of the University of Pennsylvania’s Center for Bioethics. The unfortunate fact remains that the most accurate way of gauging the thoughts and feelings of others is simply by asking them what they are thinking and feeling.

Steven Pinker, again: “We are still clueless about how the brain represents the content of our thoughts and feelings. Yes, we may know where jealousy happens—or visual images or spoken words—but ‘where’ is not the same as ‘how.’”

Nevertheless, the smashing victory of biological psychiatry was almost universally endorsed by the end of the 1990s. David Satcher, U.S. surgeon general, declared in 1999, “The bases of mental illness are chemical changes in the brain. . . . There’s no longer any justification for the distinction . . . between ‘mind and body’ or ‘mental and physical illnesses.’ Mental illnesses are physical illnesses.” Nobel laureate Francis Crick put it more directly: “‘You,’ your joys and your sorrows, your memories and your ambitions, your sense of personal identity and free will, are in fact no more than the behavior of a vast assembly of nerve cells and their associated molecules. As Lewis Carroll’s Alice might have phrased it: ‘You’re nothing but a pack of neurons.’”

The ultimate indicator of our newfound faith in scientific psychiatry may be the mysterious growth of the placebo effect in tests of the drugs the new psychiatry dispenses. When Columbia University psychiatrist B. Timothy Walsh analyzed 75 trials of antidepressants conducted between 1981 and 2000, he discovered that the rate of response to placebos, which are, of course, nothing more than sugar pills, increased by about seven percent per decade. Simply because people thought they were taking the all-powerful medicines, they thought they were getting better.

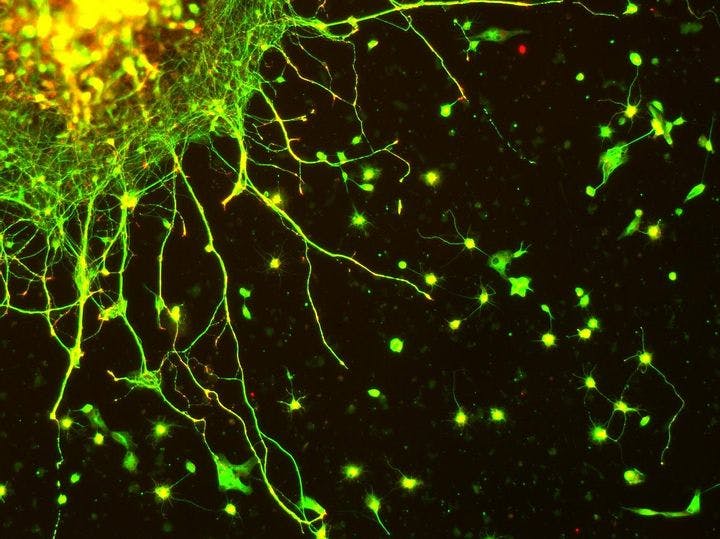

All of the evidence points to the conclusion that today’s full embrace of biological psychiatry is terribly premature, especially since we have available an increasing number of nondrug therapies of proven effectiveness. We are only in the very early stages of understanding how the brain works and what alters its functioning. Somewhere along the way we seem to have misplaced the notion that, at this stage of our scientific evolution at least, the brain’s capacity to understand itself is minimal. The task is endlessly daunting. There are, for example, more than 100 billion neurons in the human brain. Each neuron is connected to hundreds or thousands of other neurons, and each can fire electrical and neurochemical messages hundreds of times a second to other neurons across synapses. Altogether, there are 100 trillion synapses through which these signals flow. All of this activity happens within the confines of a three-to-four-pound object. And the brain is not even mainly composed of neurons. Ninety percent of the cells in the brain are not neurons but glial cells, which provide nutrition and protection to the neurons.

The brain is the most complicated object in the universe. Nobel Prize–winning psychiatrist Eric Kandel has written, “In fact, we are only beginning to understand the simplest mental functions in biological terms; we are far from having a realistic neurobiology of clinical syndromes.” Neuroscientist Torsten Wiesel, another Nobelist, scoffed at the hubris of calling the 1990s “The Decade of the Brain.” “We need at least a century, maybe even a millennium,” he said, to comprehend the brain.

“We still don’t understand how C. elegans works,” Wiesel said, referring to a small worm often used by scientists to study molecular and cell biology. In my own travels in the world of neuroresearch, I have consistently found that the elite scientists are surprisingly modest about how much we know about the brain, despite the spectacular progress in recent decades. It is the midlevel scientists who are prone to making large claims.

To this day, no one knows exactly how psychoactive drugs work. The etiology of depression remains an enduring scientific mystery, with entirely new ways of understanding the disease—or diseases, since what we think of as “depression” now is probably dozens of discrete disease entities—constantly emerging. Indeed, the basic tenet of biological psychiatry, that depression is a result of a deficit in serotonin, has proven to be one that was too eagerly embraced. When this “monoamine” theory of depression emerged in the 1960s, it gave the biologically minded practitioners of psychiatry what they had long been craving—a clean, decisive scientific theory to help bring the field in line with the rest of medicine. For patients, too, the serotonin hypothesis was enormously appealing. It not only provided the soothing clarity of a physical explanation for their maladies, it absolved them of responsibility for their illness, and to some degree, their behavior. Because, after all, who’s responsible for a chemical imbalance?

Unfortunately, from the very start there was a massive contradiction at the heart of the monoamine theory. Whatever it is that Prozac and the other members of the widely used class of drugs called selective serotonin reuptake inhibitors (SSRIs) do to change brain chemistry, it happens almost immediately after they are ingested. The neurochemical changes are quick. However, SSRIs typically take weeks, even months, to have any therapeutic influence. Why the delay? No one had any explanation until the late 1990s, when Ronald Duman, a researcher at Yale, showed that antidepressants actually grow brain cells in the hippocampus, a part of the brain associated with memory and mood regulation. Such a finding would have been viewed as preposterous even a decade earlier; one of the central dogmas of brain science for more than a century has been that the adult brain is incapable of producing new neurons. Duman showed that the dogma is false. He believes that the therapeutic effects of SSRIs are delayed because it takes weeks or months to build up a critical mass of the new brain cells sufficient to initiate a healing process in the brain.

While Duman’s explanation for the mechanism of action of the SSRIs remains controversial, a consensus is building that SSRIs most likely initiate a series of complex changes, involving many neurotransmitters, that alter the functioning of the brain at the cellular and molecular levels. It appears that SSRIs may only be the necessary first step of a “cascade” of brain changes that occur long after and well “downstream” of serotonin alterations. The frustrating truth is that depression, like all mental illnesses, is an incredibly complicated and poorly understood disease, involving many neurotransmitters, many genes, and an intricate, infinite, dialectical dance between experience and biology. One of the leading serotonin researchers, Jeffrey Meyer of the University of Toronto, summed up the misplaced logic of the monoamine hypothesis: “There is a common misunderstanding that serotonin is low during clinical depression. It mostly comes from the fact that many antidepressants raise serotonin. This is a bit like saying pneumonia is an illness of low antibiotics because we treat pneumonia with antibiotics.”

The flimsiness of the entire enterprise was brought home to me in devastating fashion in a conversation with Elliot Valenstein, a leading neuroscientist at the University of Michigan, and the author of three highly regarded and influential books on psychopharmacology and the history of psychiatry. I was talking to Valenstein about why today’s psychiatric drugs address only a very small proportion of the neurotransmitters that are thought to exist. Virtually all these drugs deal with only four neurotransmitters: dopamine and serotonin, most commonly, and also norepinephrine and GABA (technically known as gamma-aminobutyric acid). While no one knows exactly how many neurotransmitters there are in the human brain—indeed, even how a neurotransmitter is defined exactly can be a matter of debate—there are at least 100.

So I asked Valenstein, “Why do all the drugs deal with the same brain chemicals? Is it because those four neurotransmitters are the ones understood to be most implicated with mood and thought regulation—that is, the stuff of psychiatric disorders?”

“It’s entirely a historical accident,” he said. “The first psychiatric drugs were stumbled upon in the dark, completely serendipitously. No one, least of all the people who discovered them, had any idea how they worked. It was only later that the science caught up and provided evidence that those drugs influence those particular neurotransmitters. After that, all subsequent drugs were ‘copycats’ of the originals—and all of them regulated only those same four neurotransmitters. There have not been any new radically different paradigms of drug action that have been developed.” Indeed, while 100 drugs have been designed to treat schizophrenia, all of them resemble the original, Thorazine, in their mechanism of action. “So,” I asked Valenstein, “if the first drugs that were discovered had dealt with a different group of neurotransmitters, then all the drugs in use today would involve an entirely different set of neurotransmitters?”

“Yes,” he said.

“In other words, there are more than a hundred neurotransmitters, some of which could have vital impact on psychiatric syndromes, yet to be explored?” I asked.

“Absolutely,” Valenstein said. “It’s all completely arbitrary.”

The irony is that the shift to drug-oriented treatments has occurred even as the techniques of psychotherapy have improved dramatically. The old one-size-fits-all approach of long-term, fairly unstructured, verbally oriented psychoanalysis or dynamic psychotherapy has been replaced by a number of new approaches specifically geared toward particular kinds of patients.

Traditional therapies can work well for highly verbal “worried well” patients with a fair degree of insight into their problems and motivation to do something about them. But such therapies clearly don’t work for many other people. Among the new, more tailored approaches developed during the past 20 years is cognitive-behavioral therapy (CBT), which gives patients the tools to examine the thoughts, feelings, and beliefs that lie behind their behavior, and develops the skills they need to enact change at a practical level. CBT has often been shown to be as effective as drugs in treating mild to moderate depression, with a significantly lower recurrence rate. It has also been used effectively to treat a broad variety of conditions, including bulimia, hypochondriasis, obsessive-compulsive disorder, substance abuse, and post-traumatic stress disorder, and it has even emerged as a means of reducing criminal behavior.

Two other innovative treatment approaches—the Stages of Change model and Motivational Interviewing—have helped caregivers understand how to motivate (and help) people to change. These methods’ tenets, in a nutshell, are that change should be viewed as a cyclical rather than linear process; that the job of bringing about change is the responsibility of the patient, not the caregiver (a reversal of the centuries-old hierarchical construct of the doctor-patient relationship); and that the caregiver’s approach must vary according to the client’s “stage of change”—that is, the patient’s level of insight and motivation to move forward. The positive outcomes of these kinds of “psychosocial” approaches in addressing some of the most difficult human problems—including addiction and the resistance of people with mental and other illnesses to being drawn into treatment—have been shown repeatedly.

These and other verbally oriented treatments are increasingly used by mental health professionals, but they have less appeal in the citadels of modern psychiatric thought. There, the biological model has triumphed, and not only because of the glittering promise it holds. Biopsychiatry is driven by a complex network of forces, not the least of which are the allure of treating patients expeditiously with drugs rather than time-consuming and sometimes-messy therapies, and the huge profits to be reaped from antidepressants, antipsychotics, and other psychoactive drugs. For patients, however, the benefits of the new paradigm are not nearly so unambiguous. By focusing so heavily on drugs—though they can be highly effective, particularly for severe conditions—we are neglecting to expose patients to the full array of treatments and approaches that can help them get better.

If there’s any lesson to be gleaned from the recent history of psychiatry, it is, in the anthropologist Tanya Luhrmann’s words, “how complex mental illness is, how difficult to treat, and how, in the face of this complexity, people cling to coherent explanations like poor swimmers to a raft.”

We don’t know much, but we should know just enough to recognize how primitive and crude our understanding of psychiatric drugs is, and how limited our understanding of the biology of mental disorder. The unfortunate fact remains that the ills of this world have a tantalizing way of eluding simple explanation. Our only hope is to be resolute and careful, not faddish, in assessing new developments as they arise, and to adopt them judiciously within a tradition of a gradually but steadily growing arsenal in the fight against genuine human suffering.

* * *

Charles Barber worked with the homeless mentally ill in New York City for 10 years. He is a lecturer in psychiatry at Yale University and the author of Songs From the Black Chair: A Memoir of Mental Interiors. This essay is adapted from his new book, Comfortably Numb: How Psychiatry Is Medicating a Nation.
